Statistical Interpretation of Entropy

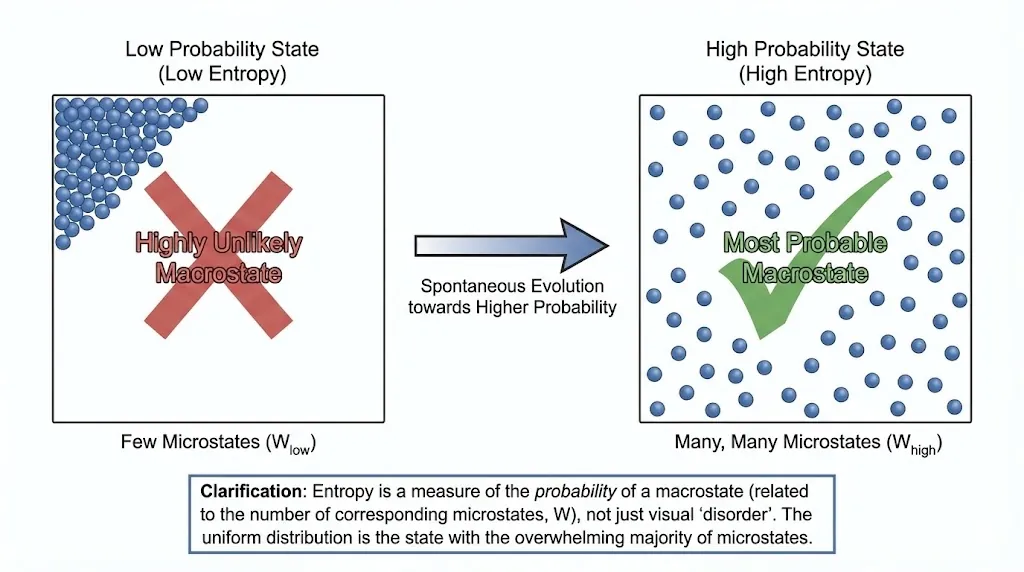

Entropy measures how probable a macrostate is, not how disordered it looks.

1. Why a Statistical Interpretation Was Needed

Classical thermodynamics defines entropy through heat and temperature, but this definition does not explain why entropy increases.

To understand irreversibility at a deeper level, a microscopic description of matter is required.

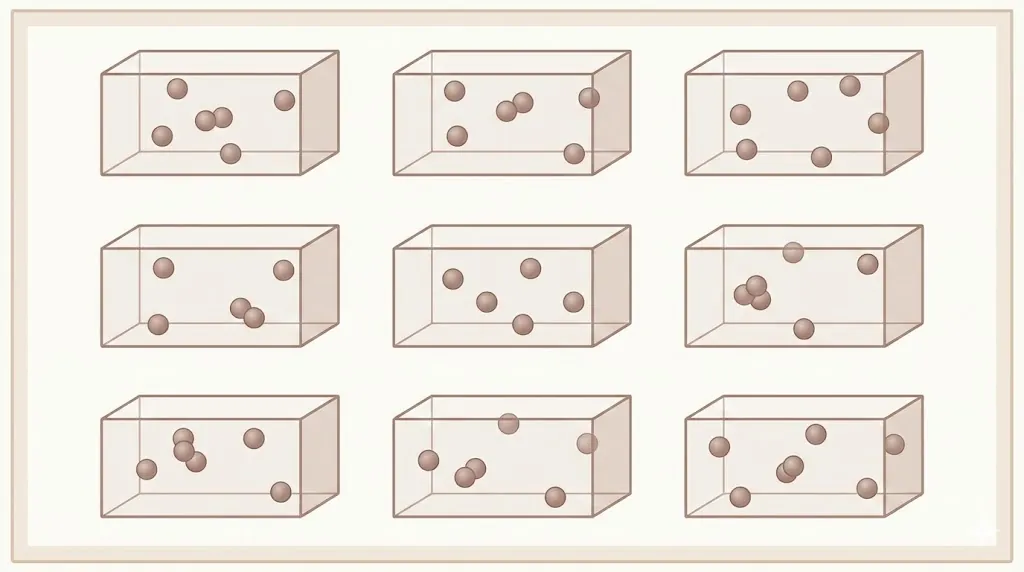

2. Macrostates and Microstates

A macrostate is defined by macroscopic variables such as pressure, volume, and temperature.

A microstate specifies the exact positions and momenta of all molecules. Many different microstates correspond to the same macrostate.
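The distinction can be made concrete with a toy model. The sketch below assumes a box split into a left and a right half and describes each labelled molecule only by which half it occupies, which is a drastic simplification of "exact positions and momenta". The macrostate is taken to be the number of molecules on the left. The function name `count_microstates` is illustrative only.

```python
from collections import Counter
from itertools import product


def count_microstates(n_molecules):
    """Map each macrostate (number of molecules in the left half)
    to the number of microstates that realise it."""
    counts = Counter()
    # Each microstate assigns every labelled molecule to "L" or "R".
    for microstate in product("LR", repeat=n_molecules):
        n_left = microstate.count("L")
        counts[n_left] += 1
    return dict(sorted(counts.items()))


print(count_microstates(4))  # {0: 1, 1: 4, 2: 6, 3: 4, 4: 1}
```

With four molecules there are 16 microstates in total, but only one of them puts every molecule on the left, while six of them give an even split.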

3. Probability and Entropy

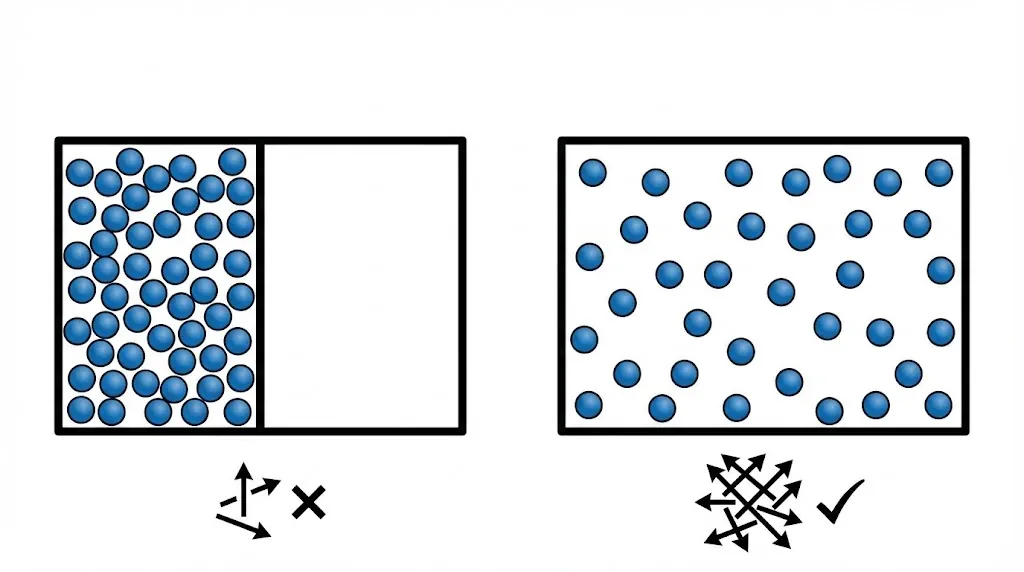

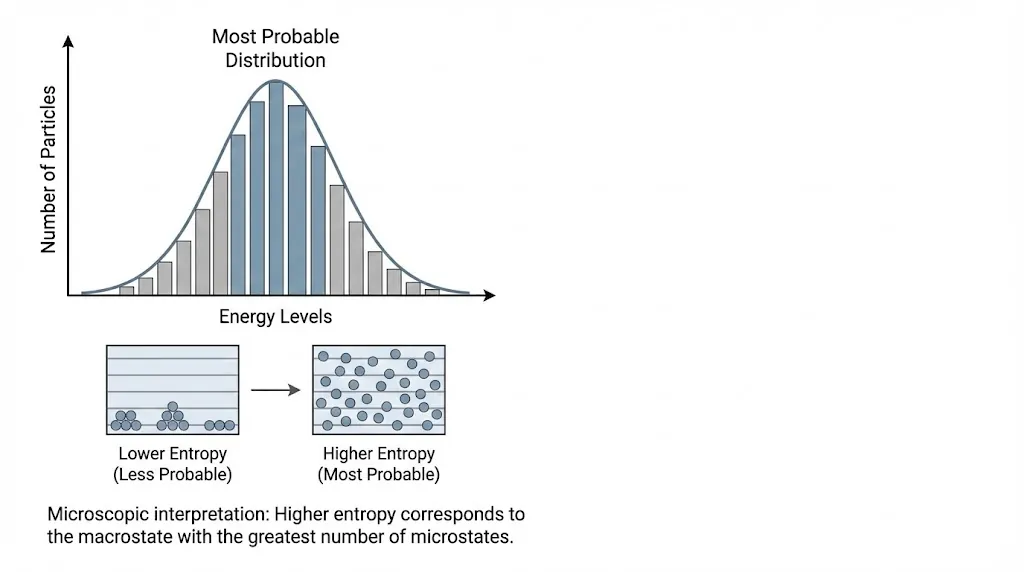

Systems naturally evolve toward macrostates with the largest number of microstates.

Because every accessible microstate of an isolated system is equally likely, such macrostates are overwhelmingly more probable.
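How strongly the probability concentrates can be estimated numerically. The sketch below reuses the assumed two-halves toy model with equally likely microstates and asks how likely it is that the fraction of molecules on the left lies within 10 percentage points of 50%. The function `prob_near_even` and its `tol_percent` parameter are hypothetical names chosen for this illustration.

```python
from math import comb


def prob_near_even(n, tol_percent=10):
    """Probability that the left-half fraction lies within tol_percent
    percentage points of 50%, with every microstate equally likely."""
    favourable = sum(
        comb(n, k)
        for k in range(n + 1)
        # |k/n - 1/2| <= tol_percent/100, written in integer arithmetic
        if abs(2 * k - n) * 100 <= 2 * tol_percent * n
    )
    return favourable / 2 ** n


for n in (10, 100, 1000):
    print(n, prob_near_even(n))
```

The probability rises quickly with the number of molecules, so for a macroscopic sample any visible departure from the even split is extraordinarily unlikely.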

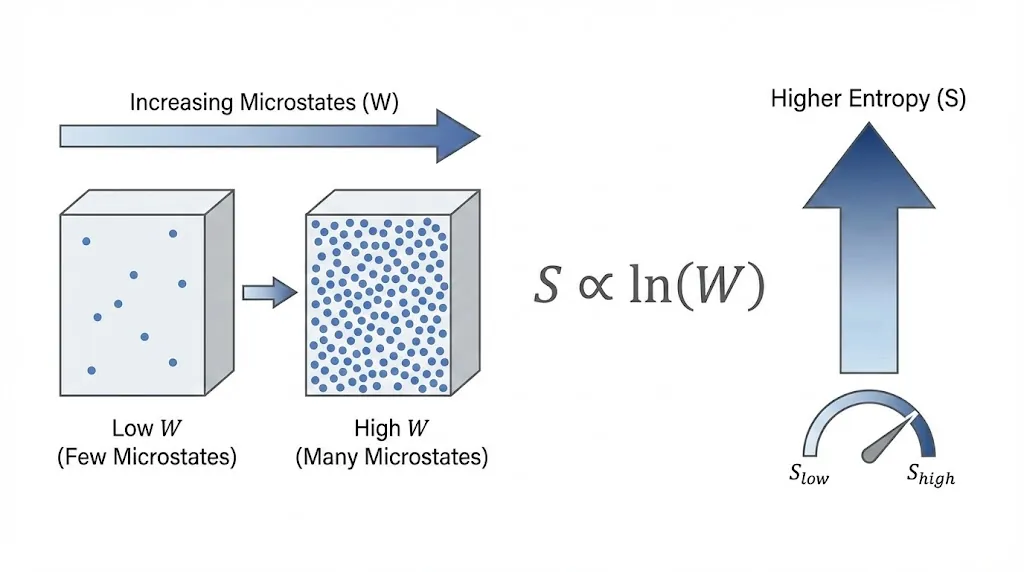

4. Boltzmann Relation

The fundamental link between entropy and microstates is given by:

$$S = k \ln W$$

Here:

- S = entropy

- k = Boltzmann constant

- W = number of accessible microstates
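As a rough numerical illustration of the relation, the sketch below evaluates S = k ln W for an assumed toy system of 100 molecules in a two-halves box, where W is the binomial coefficient for a given number of molecules on the left. It also checks that entropy is additive: for two independent systems the microstate counts multiply, so the logarithms add. The helper name `boltzmann_entropy` is illustrative.

```python
from math import comb, isclose, log

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def boltzmann_entropy(w):
    """Entropy S = k ln W for a macrostate with w accessible microstates."""
    if w < 1:
        raise ValueError("W must be at least 1")
    return K_B * log(w)


# Assumed toy system: 100 molecules, W = C(100, n_left).
s_all_left = boltzmann_entropy(comb(100, 100))  # W = 1, so S = 0
s_even = boltzmann_entropy(comb(100, 50))       # the largest W
print(s_all_left, s_even)

# Independent systems: W_total = W_a * W_b, hence S_total = S_a + S_b.
w_a, w_b = comb(100, 50), comb(60, 30)
print(isclose(boltzmann_entropy(w_a * w_b),
              boltzmann_entropy(w_a) + boltzmann_entropy(w_b)))
```

The logarithm is what turns the multiplicative counting of microstates into the additive entropy used in classical thermodynamics.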

5. Most Probable Distribution

When particles distribute themselves among energy levels, the observed distribution corresponds to the most probable arrangement.
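A small enumeration shows what "most probable arrangement" means. The sketch below assumes distinguishable particles, equally spaced energy levels 0, 1, 2, ... (in units of one quantum), and a fixed total energy. None of these details come from the text above, and all function names are hypothetical. For every set of occupation numbers it counts the microstates as N! / (n0! n1! ...) and picks the largest.

```python
from math import factorial


def partitions(total, max_parts, max_part=None):
    """Yield non-increasing tuples of positive integers that sum to
    `total` and have at most `max_parts` entries."""
    if max_part is None:
        max_part = total
    if total == 0:
        yield ()
        return
    if max_parts == 0:
        return
    for first in range(min(total, max_part), 0, -1):
        for rest in partitions(total - first, max_parts - 1, first):
            yield (first,) + rest


def distributions(n_particles, energy):
    """Yield occupation numbers (n0, n1, ..., n_energy) compatible with
    the particle number and the total energy."""
    for parts in partitions(energy, n_particles):
        occupation = [0] * (energy + 1)
        occupation[0] = n_particles - len(parts)  # particles with no quanta
        for quanta in parts:
            occupation[quanta] += 1
        yield tuple(occupation)


def multiplicity(occupation):
    """Number of microstates W = N! / (n0! n1! ...)."""
    w = factorial(sum(occupation))
    for n_i in occupation:
        w //= factorial(n_i)
    return w


best = max(distributions(6, 6), key=multiplicity)
print(best, multiplicity(best))  # (3, 1, 1, 1, 0, 0, 0) 120
```

For six particles sharing six quanta, the winning distribution has the most particles in the lowest level and fewer at higher energies. It accounts for 120 of the 462 microstates, and its lead over the other distributions grows rapidly as the particle number increases.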

6. Entropy Is Not Just Disorder

Entropy is often described as disorder, but this is only a rough analogy.

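A better interpretation is that entropy measures the number of microstates consistent with a given macrostate, and hence how probable that macrostate is.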

7. Connection to the Second Law

The second law emerges naturally from statistics.

Systems move toward macrostates with overwhelmingly larger numbers of microstates, making entropy increase practically inevitable.
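The statistical drift toward high-entropy macrostates can be watched in a simulation. The sketch below uses the Ehrenfest urn model, a standard toy model that is swapped in here for illustration and does not appear in the text above: all molecules start in the left half of a box, and at each step one molecule chosen at random hops to the other half. The dimensionless entropy S/k = ln W is recorded along the way. Parameter values and function names are assumptions.

```python
import random
from math import comb, log


def entropy_over_k(n_total, n_left):
    """Dimensionless entropy S/k = ln W with W = C(n_total, n_left)."""
    return log(comb(n_total, n_left))


def simulate(n_total=200, n_steps=2000, seed=0):
    """Start with every molecule on the left; at each step one molecule,
    chosen uniformly at random, hops to the other half."""
    rng = random.Random(seed)
    n_left = n_total
    history = [entropy_over_k(n_total, n_left)]
    for _ in range(n_steps):
        # The chosen molecule is on the left with probability n_left / n_total.
        if rng.random() < n_left / n_total:
            n_left -= 1
        else:
            n_left += 1
        history.append(entropy_over_k(n_total, n_left))
    return history


history = simulate()
s_max = entropy_over_k(200, 100)
print(history[0], history[-1], s_max)
```

Nothing in the update rule prefers high entropy. Each hop is equally likely in either direction for a given molecule, yet the entropy climbs from zero and then fluctuates just below its maximum, simply because almost all microstates belong to near-even macrostates.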